14 April 2026 • 5 min read

# Why AI Code Audits Fall Short: The Limits of Automated Security Reviews

As AI takes on a larger role in software development workflows, many organizations are turning to tools like Claude for code analysis and security auditing. These tools are capable and can meaningfully improve productivity, but treating them as complete replacements for professional security audits carries serious risks and can leave your organization exposed to sophisticated attacks.

The Appeal of AI-Driven Code Analysis

AI models excel at pattern recognition and can quickly scan large codebases for common vulnerability classes, such as those catalogued in the OWASP Top 10. They can identify well-known flaws such as SQL injection vectors, cross-site scripting (XSS) vulnerabilities, and hardcoded credentials quickly and, for familiar patterns, reliably. For development teams under tight deadlines, this capability seems like a perfect solution.

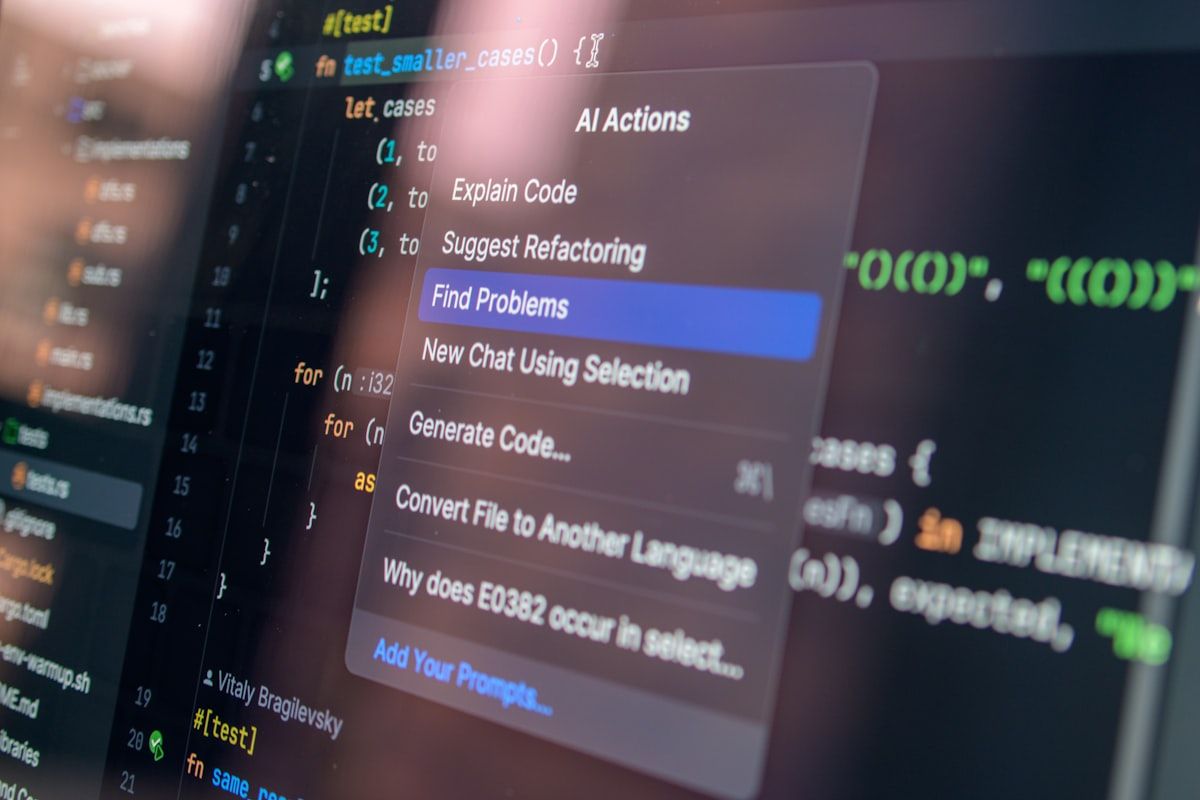

Claude and similar AI tools can process thousands of lines of code in minutes, providing immediate feedback on potential security issues. They're particularly effective at catching low-hanging fruit: the straightforward vulnerabilities that follow predictable patterns. This makes them valuable as first-line screening tools in the development process.
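To make "predictable patterns" concrete, here is a minimal sketch of pattern-based screening in Python. The two rules and the `scan` function are hypothetical illustrations, not how any particular AI tool works internally. They only show why flaws with a recognizable textual shape are easy to catch automatically.

```python
import re

# Hypothetical rule set: one regex per well-known anti-pattern.
RULES = {
    # A credential-like name assigned a quoted literal.
    "hardcoded-credential": re.compile(
        r"""(?i)(password|secret|api_key)\s*=\s*["'][^"']+["']"""
    ),
    # A SQL string literal followed by + or % inside execute(...).
    "sql-string-concat": re.compile(
        r"""(?i)execute\(\s*["'].*["']\s*(\+|%)"""
    ),
}


def scan(source: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for every line that matches a rule."""
    findings = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for name, pattern in RULES.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings


# Illustrative input: line 3 uses a parameterized query and is not flagged.
SAMPLE = '''\
db_password = "hunter2"
cursor.execute("SELECT * FROM users WHERE name = '" + name + "'")
cursor.execute("SELECT * FROM users WHERE name = %s", (name,))
'''

print(scan(SAMPLE))
```

Rules like these find what they were written to find and nothing else, which is the limitation the rest of this article explores.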

The Critical Gaps in AI Security Analysis

Contextual Understanding Limitations

The most significant limitation of AI-driven code audits lies in their inability to understand business context and application-specific threat models. A professional security auditor doesn't just look at code in isolation; they consider the entire ecosystem, including deployment environments, user access patterns, and organizational risk profiles.

For example, an AI might flag a particular API endpoint as lacking rate limiting, and therefore open to brute-force or request-flooding attacks, without understanding that the endpoint is only reachable within a private network segment protected by additional security controls. Conversely, it might overlook a seemingly innocuous function that becomes critical when viewed within the context of the application's authentication flow.

Complex Attack Chain Recognition

Modern cyber attacks rarely rely on a single vulnerability. Instead, they exploit chains of seemingly minor issues that combine to create significant security risks. Professional auditors are trained to identify these attack paths by understanding how different vulnerabilities can be chained together, often drawing on knowledge bases of attacker tactics and techniques such as MITRE ATT&CK.

AI tools struggle with this type of analysis because it requires creative thinking and deep understanding of attacker methodologies. While Claude might identify individual components that could be problematic, it lacks the strategic thinking necessary to map out sophisticated attack scenarios that combine multiple vectors.
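One way to picture an attack chain is as a path through a graph, where each finding moves an attacker from one position to another. The sketch below is illustrative only: the findings, the position names, and the `find_chain` helper are all hypothetical. The search itself is mechanical. Deciding which edges really exist in a given deployment is the part that requires an auditor's judgment.

```python
from collections import deque

# Hypothetical findings as (attacker position, finding, resulting position).
FINDINGS = [
    ("anonymous", "verbose error leaks internal hostname", "knows internal host"),
    ("knows internal host", "SSRF in image fetcher", "internal network access"),
    ("internal network access", "unauthenticated admin endpoint", "admin access"),
    ("anonymous", "missing security header", "anonymous"),
]


def find_chain(findings, start, goal):
    """Breadth-first search for the shortest chain of findings from start to goal."""
    graph = {}
    for src, finding, dst in findings:
        graph.setdefault(src, []).append((finding, dst))

    queue = deque([(start, [])])
    seen = {start}
    while queue:
        position, chain = queue.popleft()
        if position == goal:
            return chain
        for finding, nxt in graph.get(position, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, chain + [finding]))
    return None  # no chain reaches the goal


for step in find_chain(FINDINGS, "anonymous", "admin access"):
    print(step)
```

Each of the three chained findings might be rated low or medium on its own. Only the path as a whole shows that an anonymous user can reach admin access.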

Framework-Specific Nuances

Different security frameworks and compliance requirements demand specialized knowledge that extends beyond code analysis. Whether you're dealing with PCI DSS for payment processing, HIPAA for healthcare applications, or SOC 2 for service organizations, each framework has specific requirements that must be evaluated within the context of your entire security posture.

A human auditor certified in these frameworks understands not just what the code does, but how it fits within broader compliance requirements. They can assess whether your implementation meets the spirit of the regulation, not just its technical letter.

Real-World Vulnerability Examples

Business Logic Flaws

Consider a financial application where users can transfer funds between accounts. An AI might verify that proper authentication and authorization checks are in place, but it might miss a business logic flaw that allows users to transfer negative amounts, effectively adding money to their accounts. This type of vulnerability requires understanding both the code and the business rules it's meant to enforce.
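The negative-amount flaw can be sketched in a few lines of Python. The function names and the in-memory `balances` dictionary are assumptions made for illustration, and authentication and authorization are taken to have happened before these functions are called.

```python
from decimal import Decimal


class InsufficientFunds(Exception):
    """Raised when the sender's balance cannot cover the transfer."""


def transfer_unchecked(balances, sender, recipient, amount):
    """Checks the sender's balance but never validates the sign of `amount`."""
    if balances[sender] < amount:
        raise InsufficientFunds(sender)
    balances[sender] -= amount
    balances[recipient] += amount


def transfer_checked(balances, sender, recipient, amount):
    """Enforces the business rule that a transfer must move a positive amount."""
    if amount <= 0:
        raise ValueError("transfer amount must be positive")
    transfer_unchecked(balances, sender, recipient, amount)


balances = {"alice": Decimal("100"), "bob": Decimal("100")}

# A negative amount passes the balance check and reverses the flow of funds.
transfer_unchecked(balances, "alice", "bob", Decimal("-50"))
print(balances)  # alice now holds 150, bob holds 50
```

Nothing in `transfer_unchecked` matches a classic vulnerability pattern. The code is only wrong relative to a rule ("transfers move money from sender to recipient") that is stated nowhere in the source.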

Race Condition Vulnerabilities

Race conditions represent another category where AI tools often fall short. These vulnerabilities occur when the timing of operations affects the security of the system, for example when two concurrent requests both pass a balance check before either one updates the balance. A professional auditor might identify potential race conditions by understanding the application's concurrency model and deployment architecture, context that's often not available in the code itself.
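As a minimal sketch of the check-then-act pattern behind many race conditions, consider the hypothetical `Account` class below. Both methods look equally reasonable when read in isolation. Whether the unlocked version is exploitable depends on whether the surrounding system ever calls it concurrently, and that is a deployment fact rather than something visible in the method itself.

```python
import threading


class Account:
    def __init__(self, balance: int) -> None:
        self.balance = balance
        self._lock = threading.Lock()

    def withdraw_racy(self, amount: int) -> bool:
        # Check-then-act: another thread may run between the check and the
        # update, so two withdrawals can both pass the check.
        if self.balance >= amount:
            self.balance -= amount
            return True
        return False

    def withdraw_locked(self, amount: int) -> bool:
        # The lock makes the check and the update a single atomic step.
        with self._lock:
            if self.balance >= amount:
                self.balance -= amount
                return True
            return False


def run_concurrent_withdrawals(account: Account, amount: int, attempts: int) -> int:
    """Issue `attempts` locked withdrawals in parallel; return how many succeeded."""
    results = []
    threads = [
        threading.Thread(target=lambda: results.append(account.withdraw_locked(amount)))
        for _ in range(attempts)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return sum(results)


account = Account(500)
succeeded = run_concurrent_withdrawals(account, 100, 10)
print(succeeded, account.balance)  # 5 withdrawals succeed, balance ends at 0
```

In a real application the same race usually spans a database read and a later write, so the fix tends to be a transaction or row-level lock rather than an in-process lock. The in-process version is used here only to keep the sketch self-contained.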

Zero-Day and Emerging Threats

AI models are trained on historical data and known vulnerability patterns. This makes them inherently reactive rather than proactive. Professional security auditors stay current with emerging threat intelligence, zero-day vulnerabilities, and novel attack techniques that haven't yet been incorporated into AI training datasets.

The Human Element in Security

Threat Modeling Expertise

Effective security auditing begins with comprehensive threat modeling—a process that requires deep understanding of your specific use case, user base, and potential attackers. Professional auditors bring experience from numerous engagements, allowing them to identify threats that are specific to your industry or application type.

Penetration Testing Integration

Code review is just one component of a comprehensive security assessment. Professional auditors can integrate static code analysis with dynamic testing techniques, attempting to exploit identified vulnerabilities in controlled environments. This practical validation is something an AI review of source code alone does not provide.

Risk Prioritization

Not all vulnerabilities are created equal. Professional auditors can help prioritize remediation efforts based on actual risk to your organization, considering factors like exploitability, business impact, and available mitigations. AI tools, lacking this organizational context, tend to present identified issues with similar urgency, potentially overwhelming development teams with low-priority items while critical issues get lost in the noise.
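The prioritization described above can be sketched as a simple scoring function. The 1 to 5 scales, the halving for mitigated findings, and the example findings are all assumptions chosen for illustration. This is not a standard scoring system, and the inputs are exactly the judgments a human auditor supplies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    title: str
    exploitability: int  # 1 (hard to exploit) to 5 (trivial), reviewer-assigned
    impact: int          # 1 (minor) to 5 (severe business impact)
    mitigated: bool      # True if a compensating control already exists


def risk_score(finding: Finding) -> float:
    """Hypothetical score: exploitability times impact, halved if mitigated."""
    score = float(finding.exploitability * finding.impact)
    return score * 0.5 if finding.mitigated else score


def prioritize(findings: list[Finding]) -> list[Finding]:
    """Order findings from highest to lowest risk score."""
    return sorted(findings, key=risk_score, reverse=True)


findings = [
    Finding("Verbose error message", exploitability=2, impact=1, mitigated=False),
    Finding("Negative transfer amount accepted", exploitability=5, impact=5, mitigated=False),
    Finding("No rate limit on internal-only endpoint", exploitability=3, impact=3, mitigated=True),
]

for f in prioritize(findings):
    print(f"{risk_score(f):5.1f}  {f.title}")
```

The arithmetic is trivial. The value lies in the inputs: knowing that an endpoint is internal-only, or that a transfer bug touches real money, is what moves a finding up or down the list.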

Best Practices for Combining AI and Human Expertise

The most effective approach combines AI efficiency with human expertise. Use AI tools like Claude for initial screening and to catch obvious vulnerabilities early in the development process. However, supplement this with regular professional security audits that provide the contextual analysis and strategic thinking that AI cannot deliver.

Consider implementing a layered approach where AI handles continuous monitoring and basic vulnerability detection, while human auditors focus on complex analysis, threat modeling, and business-critical assessments. This combination maximizes both efficiency and security effectiveness.

Conclusion

While AI tools represent a significant advancement in automated code analysis, they cannot replace the contextual understanding, creative thinking, and domain expertise that professional security auditors provide. Organizations that rely solely on AI for security auditing may find themselves with a false sense of security, missing critical vulnerabilities that could lead to significant breaches.

The future of cybersecurity lies not in choosing between AI and human expertise, but in thoughtfully combining both to create comprehensive, effective security programs that protect against the full spectrum of modern threats.